Video tracking is an important task in many automated or semi-automated applications, such as cinematic post-production, surveillance, or traffic monitoring. Most established video tracking methods fail or produce inaccurate estimates when motion blur occurs, because they assume that the object always appears sharp in the video. We developed a novel motion tracking method that models motion blur explicitly. It estimates the continuous motion of a rigid 3-D object with known geometry in a monocular video, together with the sharp object texture. Instead of treating motion blur as a potential source of error, we exploit it as an additional source of information about the motion of the tracked object during the exposure.

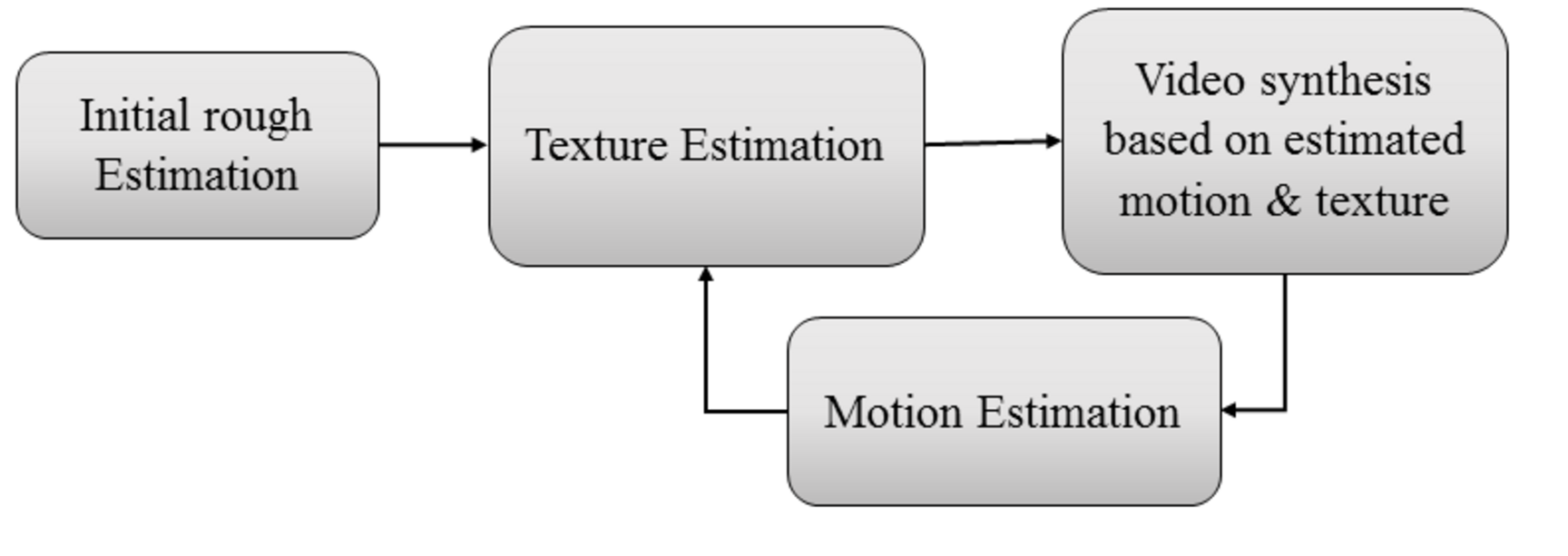

The figure above shows the structure of our method. It starts with a rough initial motion estimate, which can be guided by a user if the frames are extremely blurred and other methods fail. Using this initial motion estimate, the object's sharp texture is estimated. The texture estimated at this step usually lacks high-frequency detail and contains several artifacts, both caused by the inaccurate motion estimate, but it is still suitable for our approach. In the next step, we create a synthetic video from the estimated motion and texture, taking the object's motion during each frame into account. Finally, the motion is estimated by reducing the difference between the synthetic frames and the captured ones. Using the improved motion estimate, the texture is estimated again, and this texture is in turn used to refine the motion estimate. These steps are repeated until the estimation converges, which took only one to two iterations in our experiments.
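The analysis-by-synthesis idea behind the motion step can be illustrated with a deliberately small toy example. The sketch below is not our implementation: it assumes a 1-D signal instead of a rendered 3-D object, a constant velocity during the exposure, a known texture, and a brute-force search over candidate velocities. All function names (`shift`, `render_blurred`, `estimate_velocity`) are hypothetical and chosen only for this illustration.

```python
import math

def shift(signal, offset):
    """Resample a 1-D signal so its content moves right by `offset` pixels
    (linear interpolation, zeros outside the signal)."""
    n = len(signal)
    out = []
    for x in range(n):
        pos = x - offset
        i = math.floor(pos)
        frac = pos - i
        left = signal[i] if 0 <= i < n else 0.0
        right = signal[i + 1] if 0 <= i + 1 < n else 0.0
        out.append((1.0 - frac) * left + frac * right)
    return out

def render_blurred(texture, start, velocity, samples=16):
    """Synthesize one motion-blurred frame by averaging sharp renderings
    taken at several instants during the exposure."""
    acc = [0.0] * len(texture)
    for k in range(samples):
        t = (k + 0.5) / samples  # normalized time within the exposure
        sharp = shift(texture, start + velocity * t)
        acc = [a + s for a, s in zip(acc, sharp)]
    return [a / samples for a in acc]

def frame_error(a, b):
    """Sum of squared differences between two frames."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def estimate_velocity(captured, texture, start, candidates):
    """Return the candidate velocity whose synthetic frame is closest
    to the captured frame (assumed brute-force search)."""
    return min(
        candidates,
        key=lambda v: frame_error(render_blurred(texture, start, v), captured),
    )

# Toy data: a small textured object on a dark background.
texture = [0.0] * 40
for i in range(10, 16):
    texture[i] = 1.0
texture[12] = 0.3

true_velocity = 6.5  # pixels moved during the exposure
captured = render_blurred(texture, 2.0, true_velocity)

candidates = [0.5 * k for k in range(31)]  # 0.0, 0.5, ..., 15.0
estimated = estimate_velocity(captured, texture, 2.0, candidates)
print(estimated)  # 6.5
```

The blur itself carries the information here: a sharp frame would only constrain the position, whereas the width and shape of the smeared frame also constrain how far the object moved during the exposure.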

We designed our algorithm to run in parallel on the GPU using the standard rendering pipeline and to consider each frame individually, so that it can also handle long videos. Our texture estimation method is based on a numerical solver for huge sparse systems of equations, so even 4K videos can be processed in reasonable time without expensive hardware.
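To make the connection between texture estimation and sparse linear systems concrete, the following sketch treats each observed pixel as a weighted average of a few texture elements, which yields one sparse equation per pixel. It is an illustration under assumptions, not our solver: the 1-D box blur in `blur_rows`, the list-of-pairs row format, and the choice of conjugate gradients on the normal equations (CGLS) in `cgls` are all assumed for this example.

```python
def blur_rows(n, length):
    """One sparse row per observed pixel: the mean of `length` neighbouring
    texels, stored as (column, weight) pairs. Texels beyond the end are zero."""
    rows = []
    for i in range(n):
        rows.append([(j, 1.0 / length) for j in range(i, i + length) if j < n])
    return rows

def apply(rows, x):
    """Compute A @ x for a sparse matrix given as rows of (column, weight)."""
    return [sum(w * x[j] for j, w in row) for row in rows]

def apply_transpose(rows, y, n):
    """Compute A.T @ y for the same sparse row format."""
    out = [0.0] * n
    for row, yi in zip(rows, y):
        for j, w in row:
            out[j] += w * yi
    return out

def cgls(rows, b, n, iterations=200, tol=1e-20):
    """Least-squares solve of A x = b with conjugate gradients on the
    normal equations; only sparse products with A and A.T are needed."""
    x = [0.0] * n
    r = list(b)                      # residual b - A x (x starts at zero)
    s = apply_transpose(rows, r, n)  # A.T @ r
    p = list(s)
    gamma = sum(v * v for v in s)
    for _ in range(iterations):
        if gamma < tol:
            break
        q = apply(rows, p)
        alpha = gamma / sum(v * v for v in q)
        x = [xi + alpha * pi for xi, pi in zip(x, p)]
        r = [ri - alpha * qi for ri, qi in zip(r, q)]
        s = apply_transpose(rows, r, n)
        gamma_new = sum(v * v for v in s)
        p = [si + (gamma_new / gamma) * pi for si, pi in zip(s, p)]
        gamma = gamma_new
    return x

# Toy data: a 12-texel texture observed in two frames with different blur.
true_texture = [0.2, 0.9, 0.4, 0.7, 0.1, 0.8, 0.5, 0.3, 1.0, 0.6, 0.0, 0.35]
n = len(true_texture)
rows = blur_rows(n, 3) + blur_rows(n, 2)  # stacked equations of both frames
observed = apply(rows, true_texture)      # simulated blurred observations

estimated_texture = cgls(rows, observed, n)
max_error = max(abs(e - t) for e, t in zip(estimated_texture, true_texture))
```

Because each equation touches only a handful of unknowns, memory and work per iteration grow with the number of nonzero weights rather than with the square of the image size, which is what makes high-resolution textures tractable in principle.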

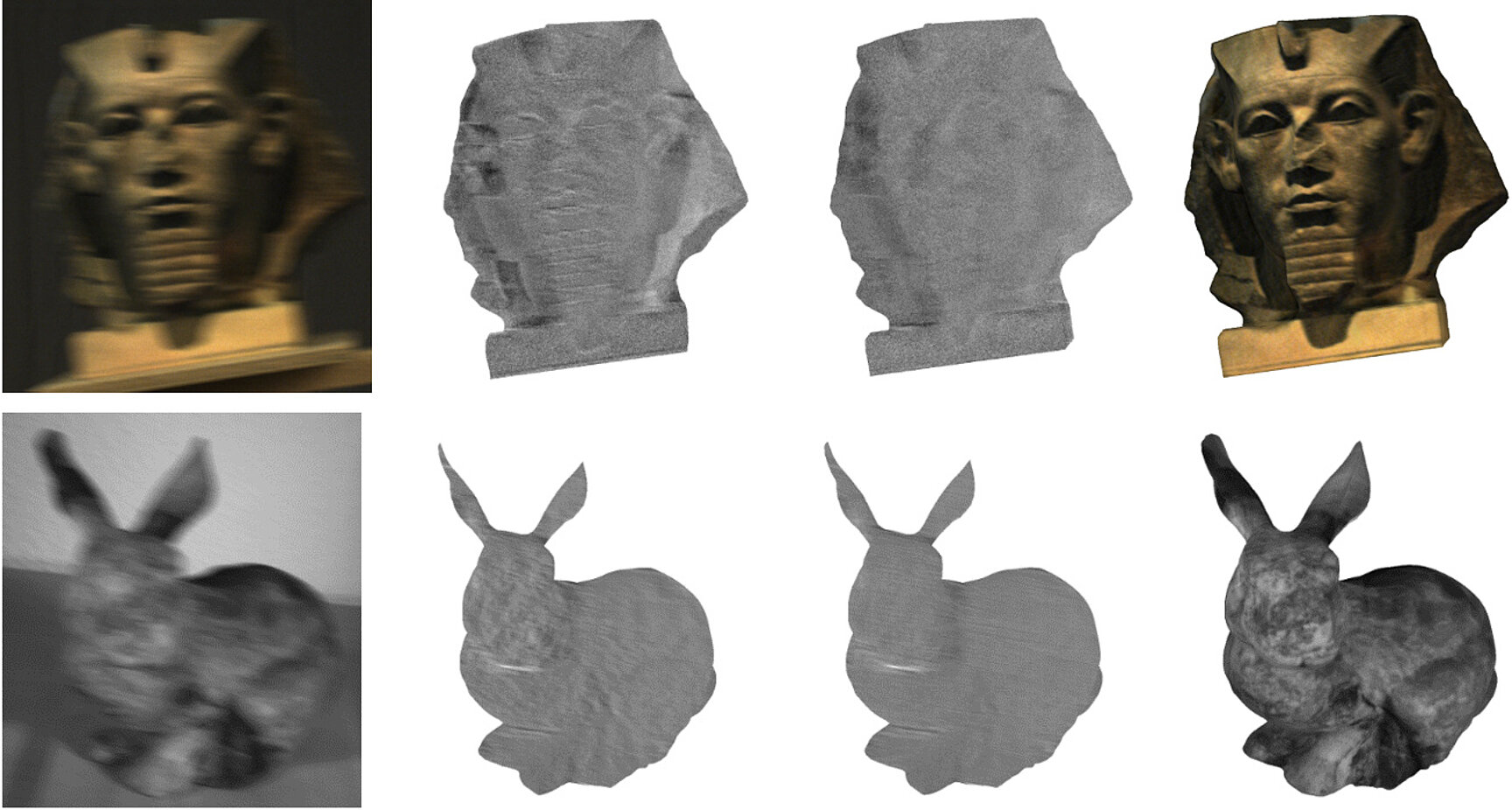

We tested our approach on synthetic and real videos. For quantitative evaluation on real videos, we designed a special setup that captures stereo videos with a different exposure time for each camera, and thus records the same dynamic scene both sharp and blurred at the same time. We used the sharp images to obtain ground-truth motion. Using our approach, we significantly improved the accuracy of the estimated motion compared to motion tracking methods that do not consider motion blur. The videos that were captured with the described setup are available here.

Publication

C. Seibold, A. Hilsmann, P. Eisert

Model-based motion blur estimation for the improvement of motion tracking, Computer Vision and Image Understanding, vol. 160, pp. 45-56, July 2017, ISSN 1077-3142.