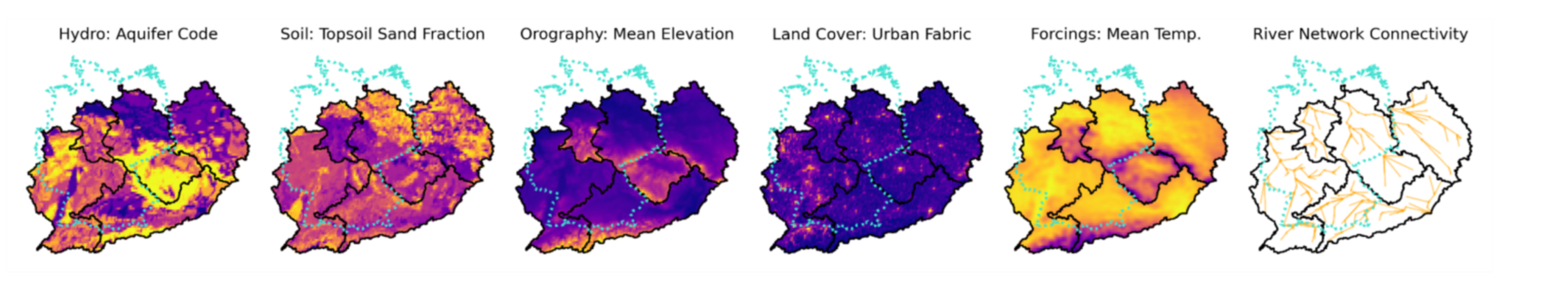

Multimodal Time Series and Maps

Time series and map data from a variety of sources can be flexibly combined as input to a neural network. The network automatically learns which information is relevant and how to combine it into multimodal insights, without hand-designed rules or manual control. Because our neural network pipelines can process large amounts of data, they can make effective use of the rich and diverse modern data ecosystem, including satellite-based remote sensing and sensor networks.
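To illustrate how a network can weight modalities against each other, here is a minimal NumPy sketch of attention-based fusion. It assumes one encoder per modality (a per-step projection averaged over time for the time series, a per-pixel projection averaged over space for the map) and a query vector that scores each modality embedding. In a real pipeline the projections and the query would be learned by backpropagation; here they are random. All function names, shapes, and the example data are hypothetical and not taken from HHI's pipelines.

```python
import numpy as np

rng = np.random.default_rng(0)


def encode_time_series(series: np.ndarray, weights: np.ndarray) -> np.ndarray:
    """Embed a (time_steps, channels) series: project each step, then average over time."""
    return np.tanh(series @ weights).mean(axis=0)


def encode_map(raster: np.ndarray, weights: np.ndarray) -> np.ndarray:
    """Embed a (height, width, bands) raster: project each pixel, then average over space."""
    pixels = raster.reshape(-1, raster.shape[-1])
    return np.tanh(pixels @ weights).mean(axis=0)


def attention_fuse(embeddings: list, query: np.ndarray):
    """Softmax attention over modality embeddings; returns fused vector and weights."""
    stacked = np.stack(embeddings)                  # (num_modalities, dim)
    scores = stacked @ query / np.sqrt(query.size)  # scaled dot-product scores
    weights = np.exp(scores - scores.max())         # numerically stable softmax
    weights /= weights.sum()
    return weights @ stacked, weights


dim = 8
series = rng.normal(size=(30, 3))      # hypothetical: 30 days of 3 sensor channels
raster = rng.normal(size=(16, 16, 4))  # hypothetical: 16x16 satellite tile, 4 bands
W_ts = rng.normal(size=(3, dim))       # would be learned; random here
W_map = rng.normal(size=(4, dim))      # would be learned; random here
query = rng.normal(size=dim)           # would be learned; random here

fused, modality_weights = attention_fuse(
    [encode_time_series(series, W_ts), encode_map(raster, W_map)], query
)
print(fused.shape, modality_weights)
```

The softmax weights show how strongly each modality contributes to the fused vector; training the query end to end is what would let a network learn which source to rely on.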

Integration of Epidemiology and AI

Furthermore, HHI explores new approaches for integrating established epidemiological knowledge into modern data-driven methods. We use partial differential equations to systematically embed proven epidemiological principles into AI models.

In recent work from 2025 [1], we used epidemiological differential equations to generate synthetic training data for our forecasting models. The resulting models perform significantly better than purely data-driven methods.
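The idea of generating training data from an epidemiological model can be sketched as follows. This example assumes the classic, non-spatial SIR ordinary differential equation with randomly sampled transmission and recovery rates, integrated with forward Euler; it is not the model from [1], and the parameter ranges, window lengths, and function names are illustrative assumptions. Each simulated outbreak is cut into (past window, future window) pairs that a forecasting model could learn from.

```python
import numpy as np


def simulate_sir(beta, gamma, i0, days, dt=0.1):
    """Integrate the SIR ODE with forward Euler; return the daily infected fraction."""
    s, i, r = 1.0 - i0, i0, 0.0
    steps_per_day = int(round(1 / dt))
    infected = []
    for _ in range(days):
        for _ in range(steps_per_day):
            new_infections = beta * s * i * dt
            new_recoveries = gamma * i * dt
            s, i, r = s - new_infections, i + new_infections - new_recoveries, r + new_recoveries
        infected.append(i)
    return np.array(infected)


def make_windows(series, history, horizon):
    """Slice a trajectory into (past window, future window) training pairs."""
    inputs, targets = [], []
    for start in range(len(series) - history - horizon + 1):
        inputs.append(series[start:start + history])
        targets.append(series[start + history:start + history + horizon])
    return np.array(inputs), np.array(targets)


rng = np.random.default_rng(42)
all_inputs, all_targets = [], []
for _ in range(100):                    # 100 synthetic outbreaks
    beta = rng.uniform(0.2, 0.5)        # transmission rate (assumed range)
    gamma = rng.uniform(0.05, 0.2)      # recovery rate (assumed range)
    trajectory = simulate_sir(beta, gamma, i0=1e-3, days=120)
    X, Y = make_windows(trajectory, history=28, horizon=7)
    all_inputs.append(X)
    all_targets.append(Y)

X_syn, Y_syn = np.concatenate(all_inputs), np.concatenate(all_targets)
print(X_syn.shape, Y_syn.shape)
```

One plausible way to use such pairs is to pretrain a forecaster on them and then fine-tune it on observed case counts; this combination strategy is an assumption for illustration only.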

The differential equation we developed models both the temporal evolution and the spatial diffusion of infected persons, and thus provides a realistic foundation for more precise predictions.
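A common way to model both temporal evolution and spatial spread is a reaction-diffusion extension of SIR, in which each compartment also diffuses in space: ∂S/∂t = −βSI + D∇²S, ∂I/∂t = βSI − γI + D∇²I, ∂R/∂t = γI + D∇²R. The sketch below solves this system on a 1D grid with explicit finite differences and zero-flux boundaries. It is a generic textbook model chosen for illustration, not the equation developed by HHI; the grid, parameters, and function names are assumptions.

```python
import numpy as np


def laplacian_1d(u, dx):
    """Second difference with zero-flux (Neumann) boundaries via edge padding."""
    padded = np.pad(u, 1, mode="edge")
    return (padded[2:] - 2 * u + padded[:-2]) / dx**2


def simulate_spatial_sir(n_cells=100, length=100.0, days=60, dt=0.05,
                         beta=0.4, gamma=0.1, diffusion=1.0):
    """Reaction-diffusion SIR on a 1D domain; returns daily infected density (days, n_cells)."""
    dx = length / n_cells
    # The explicit (forward-time, centered-space) scheme needs D * dt / dx^2 <= 0.5.
    if diffusion * dt / dx**2 > 0.5:
        raise ValueError("time step too large for the explicit scheme")
    S = np.ones(n_cells)
    I = np.zeros(n_cells)
    R = np.zeros(n_cells)
    I[n_cells // 2] = 0.01  # seed an outbreak in the central cell
    S -= I
    steps_per_day = int(round(1 / dt))
    snapshots = []
    for _ in range(days):
        for _ in range(steps_per_day):
            infection = beta * S * I  # local transmission term
            recovery = gamma * I      # local recovery term
            S, I, R = (
                S + dt * (-infection + diffusion * laplacian_1d(S, dx)),
                I + dt * (infection - recovery + diffusion * laplacian_1d(I, dx)),
                R + dt * (recovery + diffusion * laplacian_1d(R, dx)),
            )
        snapshots.append(I.copy())
    return np.array(snapshots)


I_map = simulate_spatial_sir()
print(I_map.shape)
```

The function checks the stability condition D·dt/dx² ≤ 0.5 before running. Repeating the simulation with sampled β, γ, and D would yield a set of spatio-temporal synthetic training examples.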

Multimodal Medical AI for Robust and Trustworthy Decision-Making

Modern clinical decision-making increasingly benefits from multimodal artificial intelligence (AI) systems that integrate diverse sources of information, such as medical images, clinical text, and structured patient data, to deliver more accurate, interpretable, and trustworthy predictions. In real-world healthcare settings, however, not all data modalities are consistently available at inference time, which poses a major challenge for deploying such models in practice.

To address this limitation, we developed Multimodal Privileged Knowledge Distillation (MMPKD), a training strategy that uses additional modalities, available only during training, to guide a model that receives a single modality at test time. We used a text-based teacher model for chest radiographs (MIMIC-CXR) and a tabular-metadata-based teacher model for mammography (CBIS-DDSM) to distill knowledge into a vision transformer student model. This improved the student model's zero-shot ability to localize regions of interest in medical images, demonstrating the potential of multimodal distillation for enhancing model interpretability and robustness, even when only a single modality is available at deployment.
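The core of knowledge distillation can be illustrated with the standard logit-matching loss of Hinton et al. (2015), sketched below in NumPy. It assumes the teacher (standing in for the text- or metadata-based model) produces class logits during training, and the image-only student is trained on a mix of ground-truth cross-entropy and the temperature-scaled KL divergence to the teacher's softened predictions. Whether MMPKD distills logits, embeddings, or attention maps is not stated in this section, so treat the loss form, the `temperature`, and the weighting `alpha` as assumptions.

```python
import numpy as np


def softmax(logits, temperature=1.0):
    """Row-wise softmax with temperature scaling and max-subtraction for stability."""
    z = logits / temperature
    z = z - z.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)


def distillation_loss(student_logits, teacher_logits, labels, temperature=2.0, alpha=0.5):
    """alpha * CE(labels, student) + (1 - alpha) * T^2 * KL(teacher_T || student_T)."""
    eps = 1e-12
    p_student = softmax(student_logits)
    ce = -np.log(p_student[np.arange(len(labels)), labels] + eps).mean()
    p_t = softmax(teacher_logits, temperature)  # softened teacher predictions
    p_s = softmax(student_logits, temperature)  # softened student predictions
    kl = (p_t * (np.log(p_t + eps) - np.log(p_s + eps))).sum(axis=-1).mean()
    return alpha * ce + (1 - alpha) * temperature**2 * kl


# Hypothetical batch: 2 images, 3 classes. Teacher logits would come from the
# privileged (text or metadata) teacher and are only needed during training.
student_logits = np.array([[1.2, 0.3, -0.5], [0.2, 0.9, 0.1]])
teacher_logits = np.array([[2.0, 0.1, -1.0], [-0.3, 1.8, 0.2]])
labels = np.array([0, 1])
print(distillation_loss(student_logits, teacher_logits, labels))
```

At deployment only the student's logits are needed, which is what allows the student to run when the privileged modality is missing.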

Publications

Baur, S., Benova, A., Cantú, E. D., & Ma, J. (2025). On the effectiveness of multimodal privileged knowledge distillation in two vision transformer based diagnostic applications. arXiv preprint arXiv:2508.06558. https://arxiv.org/abs/2508.06558

Cheng, X., Vischer, M., Schellin, Z., Arras, L., Kuglitsch, M. M., Samek, W., & Ma, J. (2023). Explainability in geoAI. In Handbook of Geospatial Artificial Intelligence (pp. 177-200). CRC Press. https://doi.org/10.1201/9781003308423

Vischer, M. A., Otero, N., & Ma, J. (2025). Spatially resolved rainfall streamflow modeling in Central Europe. EGUsphere, 2025, 1-26. https://doi.org/10.5194/egusphere-2024-3649

Vischer, M. A., Otero, N., & Ma, J. (2025). Spatially resolved meteorological and ancillary data in Central Europe for rainfall streamflow modeling. arXiv preprint arXiv:2506.03819. https://arxiv.org/abs/2506.03819