We developed a system for the semi-automated segmentation of frontal human head portraits from arbitrary unknown backgrounds. An initial cutout is computed without any user interaction by exploiting information from a face feature detector, image-deduced color models, and a learned parametric head shape model as prior information. If necessary, precise corrections can be applied in an optional refinement step with minimal user interaction.

Unsupervised Shape-Constrained Segmentation

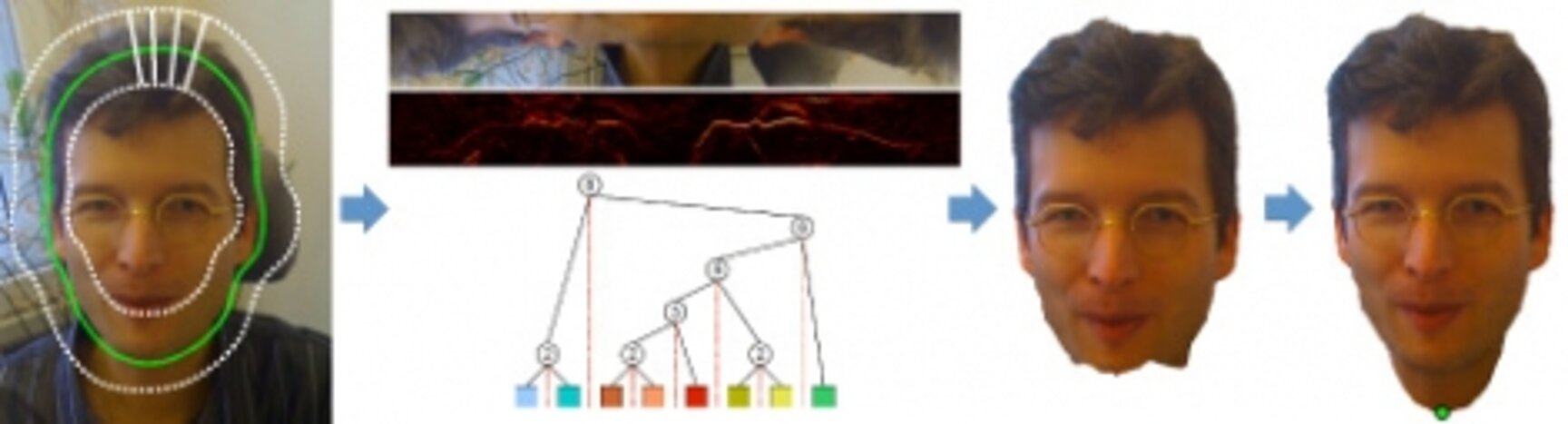

The eye positions found by a standard AdaBoost eye detector are used to transform the image into a 2D polar reference frame that is normalized for scale and rotation; all subsequent processing takes place in this frame. Besides providing geometric normalization, this reduces the head contour search to an essentially 1D problem, because the boundary can be approximated by a one-dimensional vector of polar radii.
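The normalization can be illustrated with a minimal sketch. It assumes, for illustration only, that the frame's origin is the midpoint between the two detected eyes, that the unit length is the inter-eye distance, and that angle zero points along the eye axis; the actual choice of origin and scale in the system is not stated here. The function names `make_polar_frame` and `contour_to_image` are hypothetical.

```python
import math

def make_polar_frame(left_eye, right_eye):
    """Build mappings between image coordinates and a normalized polar frame.

    Assumptions (not taken from the original system): the origin is the
    midpoint between the eyes, the unit length is the inter-eye distance,
    and angle 0 points from the left eye towards the right eye.
    """
    (lx, ly), (rx, ry) = left_eye, right_eye
    cx, cy = (lx + rx) / 2.0, (ly + ry) / 2.0
    dx, dy = rx - lx, ry - ly
    scale = math.hypot(dx, dy)
    if scale == 0.0:
        raise ValueError("eye positions must differ")
    angle = math.atan2(dy, dx)  # in-plane rotation of the eye axis

    def to_polar(x, y):
        # Translate to the origin, undo the rotation, then undo the scale.
        u, v = x - cx, y - cy
        cos_a, sin_a = math.cos(-angle), math.sin(-angle)
        ur = (u * cos_a - v * sin_a) / scale
        vr = (u * sin_a + v * cos_a) / scale
        return math.hypot(ur, vr), math.atan2(vr, ur)

    def to_image(r, theta):
        # Inverse mapping: reapply rotation, scale, and translation.
        phi = theta + angle
        return (cx + r * scale * math.cos(phi),
                cy + r * scale * math.sin(phi))

    return to_polar, to_image

def contour_to_image(radii, to_image):
    """Turn a 1D vector of polar radii into image-space contour points.

    The k-th radius is taken at angle 2*pi*k/K, so the whole closed
    contour is described by len(radii) numbers.
    """
    count = len(radii)
    return [to_image(r, 2.0 * math.pi * k / count)
            for k, r in enumerate(radii)]

if __name__ == "__main__":
    to_polar, to_image = make_polar_frame((40.0, 50.0), (60.0, 50.0))
    for point in contour_to_image([1.0, 1.2, 1.0, 0.8], to_image):
        print(point)
```

Representing the contour as one radius per sampled angle is what turns the boundary search into a search over a single vector, at the cost of only being able to describe star-shaped outlines around the chosen origin.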

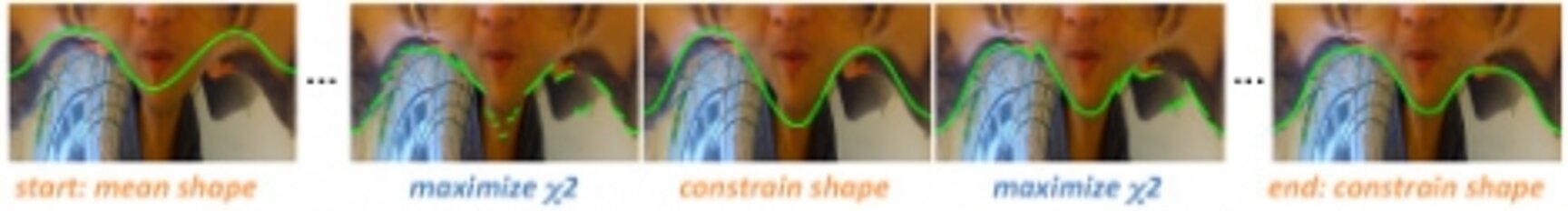

A parametric PCA head shape model, learned offline from a large database of manually segmented images, guides the rough boundary fitting stage. The shape model provides statistically safe foreground and background regions, which are used to train Gaussian mixture color models and to compute a foreground posterior probability for each pixel. An iterative optimization procedure then maximizes the difference between the foreground probabilities on the two sides of the boundary, while constraining the contour to a plausible head shape by means of the PCA shape model.
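The two ingredients of this optimization can be sketched as follows. This is an illustration under stated assumptions, not the published procedure: the contour is a vector of radii, the PCA modes are orthonormal, plausibility is enforced by clamping each PCA coefficient to a multiple of its standard deviation, and the boundary term is read as the mean foreground probability just inside the contour minus the mean just outside. The names `constrain_shape` and `boundary_contrast` and the parameters `limit` and `band` are hypothetical.

```python
import math

def constrain_shape(radii, mean, modes, variances, limit=3.0):
    """Project a radii vector onto a PCA shape model and clamp it.

    modes are assumed to be orthonormal vectors; each coefficient is
    clamped to +/- limit * sqrt(variance), a common way of keeping a
    shape statistically plausible (an assumption, not the source's rule).
    """
    centered = [r - m for r, m in zip(radii, mean)]
    result = list(mean)
    for mode, var in zip(modes, variances):
        coeff = sum(c * e for c, e in zip(centered, mode))
        bound = limit * math.sqrt(var)
        coeff = max(-bound, min(bound, coeff))
        result = [x + coeff * e for x, e in zip(result, mode)]
    return result

def boundary_contrast(posterior, contour, band=2):
    """Score how well a contour separates foreground from background.

    posterior[a][r] is the foreground probability at angle index a and
    radius index r in the polar frame; contour[a] is the radius index of
    the boundary at angle a. The score is the mean probability in a band
    just inside the boundary minus the mean in a band just outside.
    """
    total = 0.0
    for row, c in zip(posterior, contour):
        inside = row[max(0, c - band):c]
        outside = row[c:c + band]
        if inside and outside:
            total += sum(inside) / len(inside) - sum(outside) / len(outside)
    return total / len(contour)

if __name__ == "__main__":
    mean = [1.0, 1.0, 1.0, 1.0]
    modes = [[0.5, 0.5, 0.5, 0.5]]   # one orthonormal mode: uniform growth
    variances = [0.01]
    print(constrain_shape([2.0, 2.0, 2.0, 2.0], mean, modes, variances))

    posterior = [[1.0, 1.0, 1.0, 0.0, 0.0, 0.0]] * 2
    print(boundary_contrast(posterior, [3, 3]))
```

An iterative scheme could alternate between moving each radius towards a higher contrast score and calling `constrain_shape` on the result, so that local color evidence never pulls the contour into an implausible head shape.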

The rough cutout from the previous stage is locally refined with a boundary-based algorithm called "Cluster Cutting". Cluster Cutting fits the initial contour to the local boundaries in the image, using a cost function derived from clustering the pixels along the normal of the initial segmentation path with a tree-building algorithm. An interactive version of the same algorithm drives the optional refinement stage, where the user can apply precise corrections with a minimum number of mouse clicks.

Publications

D. C. Schneider, B. Prestele, P. Eisert

Precise Head Segmentation on Arbitrary Backgrounds, IEEE International Conference on Image Processing (ICIP), pp. 2381-2384, Cairo, November 2009.