Data-driven methods in video coding

A key task of research in video coding is to find redundancies in typical video signals and to build compression tools that exploit them. At the same time, the field of machine learning has developed powerful methods for discovering hidden patterns in large data sets. In our research, we aim to apply some of these methods to the design of new video coding technologies.

Neural-network-based intra prediction modes

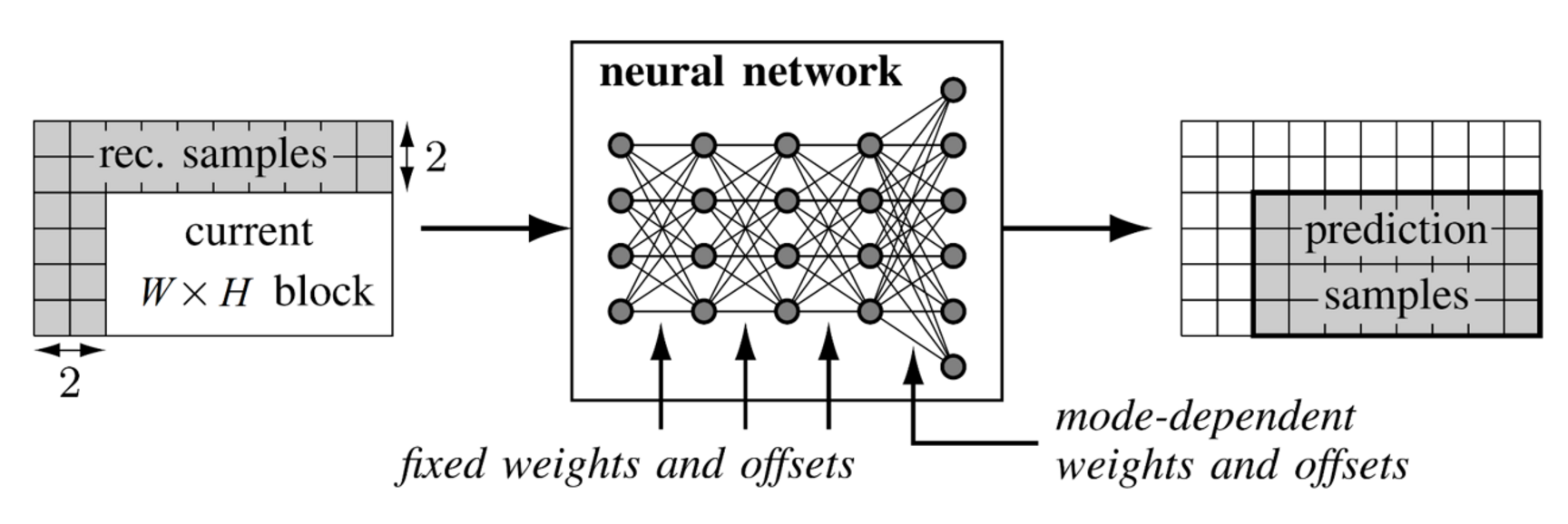

For our response to the Call for Proposals on new video compression technologies, we developed intra prediction modes based on neural networks. These modes yield significant coding gains over state-of-the-art video compression technologies. The parameters of the neural networks were determined by a training algorithm that aims to model some of the key properties of a modern hybrid video codec, such as the partitioning into variable block sizes and the transform coefficient coding of prediction residuals. Several modes were trained for each block size. To signal the selected mode, a second neural network was designed whose output is a conditional probability distribution over all modes.
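As a rough illustration of the two kinds of networks described above, the following Python sketch pairs one small fully connected predictor per mode with a mode network whose softmax output is a probability distribution over the modes, conditioned on the reference samples. Everything in it is a hypothetical toy: the class and function names, the layer sizes, the leaky-ReLU activation, the use of one row of top and one column of left reference samples as input, and the random untrained weights are assumptions, not the trained networks from the proposal, and the training algorithm itself is not shown. The ideal signalling cost of a mode is -log2 of its probability.

```python
import math
import random


def dense(weights, bias, x):
    """Fully connected layer: weights @ x + bias."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


def leaky_relu(v, slope=0.01):
    return [vi if vi > 0 else slope * vi for vi in v]


def softmax(logits):
    m = max(logits)  # subtract the maximum for numerical stability
    e = [math.exp(z - m) for z in logits]
    s = sum(e)
    return [ei / s for ei in e]


def random_layer(rng, n_out, n_in, scale=0.1):
    """Hypothetical untrained layer; a real system would use trained weights."""
    weights = [[rng.uniform(-scale, scale) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out


class NeuralIntraModes:
    """Toy set of NN intra modes for one block size (all sizes are assumptions)."""

    def __init__(self, block, n_modes, hidden=16, seed=0):
        rng = random.Random(seed)
        n_ref = 2 * block  # assumed input: top row + left column of reference samples
        self.block = block
        # One two-layer predictor network per mode: n_ref -> hidden -> block*block.
        self.pred_nets = [
            (random_layer(rng, hidden, n_ref), random_layer(rng, block * block, hidden))
            for _ in range(n_modes)
        ]
        # Mode network: n_ref -> hidden -> one logit per mode.
        self.mode_net = (random_layer(rng, hidden, n_ref), random_layer(rng, n_modes, hidden))

    @staticmethod
    def _mlp(net, x):
        (w1, b1), (w2, b2) = net
        return dense(w2, b2, leaky_relu(dense(w1, b1, x)))

    def predict(self, ref, mode):
        """Prediction signal of one mode, returned as `block` rows of `block` samples."""
        flat = self._mlp(self.pred_nets[mode], ref)
        b = self.block
        return [flat[r * b:(r + 1) * b] for r in range(b)]

    def mode_probabilities(self, ref):
        """Conditional distribution p(mode | reference samples)."""
        return softmax(self._mlp(self.mode_net, ref))


def signalling_bits(probs, mode):
    """Ideal code length in bits for coding `mode` with the distribution `probs`."""
    return -math.log2(probs[mode])


modes = NeuralIntraModes(block=4, n_modes=6)
ref = [0.5, 0.6, 0.7, 0.8, 0.4, 0.3, 0.2, 0.1]  # normalized reference samples
probs = modes.mode_probabilities(ref)
likely = max(range(len(probs)), key=probs.__getitem__)
print([round(p, 3) for p in probs])
print(likely, round(signalling_bits(probs, likely), 2))
print(modes.predict(ref, likely)[0])
```

Because the distribution depends on the reference samples, modes that the mode network considers likely for a given neighbourhood become cheap to signal, while unlikely modes cost more bits.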

We designed several variants of the neural-network-based intra prediction modes that represent different operating points in the trade-off between complexity and compression gain. The key idea is to define fixed sparsity patterns in the transform domain for the input and output of the prediction modes. In this way, both the number of parameters and the number of multiplications required for the neural-network-based prediction can be significantly reduced.
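The following Python sketch illustrates how fixed transform-domain sparsity patterns reduce the parameter count. To keep it short, it replaces the neural network by a single linear map and assumes an orthonormal DCT-II on both sides, with the lowest-frequency input coefficients and a hand-picked set of low-frequency output positions as the fixed patterns. These choices, the class and function names, and the weights are illustrative assumptions rather than the configuration used in the proposal.

```python
import math


def dct_matrix(n):
    """Orthonormal DCT-II matrix; row k holds the k-th basis function."""
    rows = []
    for k in range(n):
        scale = math.sqrt(1.0 / n) if k == 0 else math.sqrt(2.0 / n)
        rows.append([scale * math.cos(math.pi * (2 * i + 1) * k / (2 * n)) for i in range(n)])
    return rows


class SparseTransformPredictor:
    """Linear stand-in for a transform-domain predictor with fixed sparsity patterns.

    in_keep:     number of lowest-frequency input coefficients that are used
    out_pattern: fixed (vertical, horizontal) frequency positions allowed to be non-zero
    weights:     len(out_pattern) x in_keep matrix (hypothetical; trained in practice)
    """

    def __init__(self, n_ref, block, in_keep, out_pattern, weights):
        self.t_in = dct_matrix(n_ref)[:in_keep]  # kept input basis functions only
        self.d = dct_matrix(block)
        self.block = block
        self.out_pattern = out_pattern
        self.weights = weights

    def predict(self, ref):
        # 1) Forward DCT of the reference samples, keeping in_keep coefficients.
        c_in = [sum(t * r for t, r in zip(row, ref)) for row in self.t_in]
        # 2) Map them to the few output coefficients allowed by the pattern.
        c_out = [sum(w * c for w, c in zip(row, c_in)) for row in self.weights]
        # 3) Inverse 2D DCT, summing only over the non-zero positions (u, v).
        n, d = self.block, self.d
        return [[sum(c * d[u][y] * d[v][x] for (u, v), c in zip(self.out_pattern, c_out))
                 for x in range(n)] for y in range(n)]


def dense_params(n_ref, block):
    """Weights of one dense layer from n_ref samples to all block*block samples."""
    return n_ref * block * block


def sparse_params(in_keep, n_out_coeffs):
    """Weights when only the fixed input and output coefficients are connected."""
    return in_keep * n_out_coeffs


pattern = [(0, 0), (0, 1), (1, 0), (1, 1), (0, 2), (2, 0)]
print(dense_params(8, 4), sparse_params(4, len(pattern)))  # 128 24

# Toy check, DC in and DC out: the weight sqrt(2) maps a flat boundary of
# 8 samples to a flat 4x4 block of the same level.
flat = SparseTransformPredictor(8, 4, 1, [(0, 0)], [[math.sqrt(2.0)]])
print(flat.predict([100.0] * 8)[0])
```

For an 8-sample boundary and a 4x4 block, connecting only 4 input and 6 output coefficients needs 24 weights instead of 128; the same reasoning applies to each layer of a deeper network.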

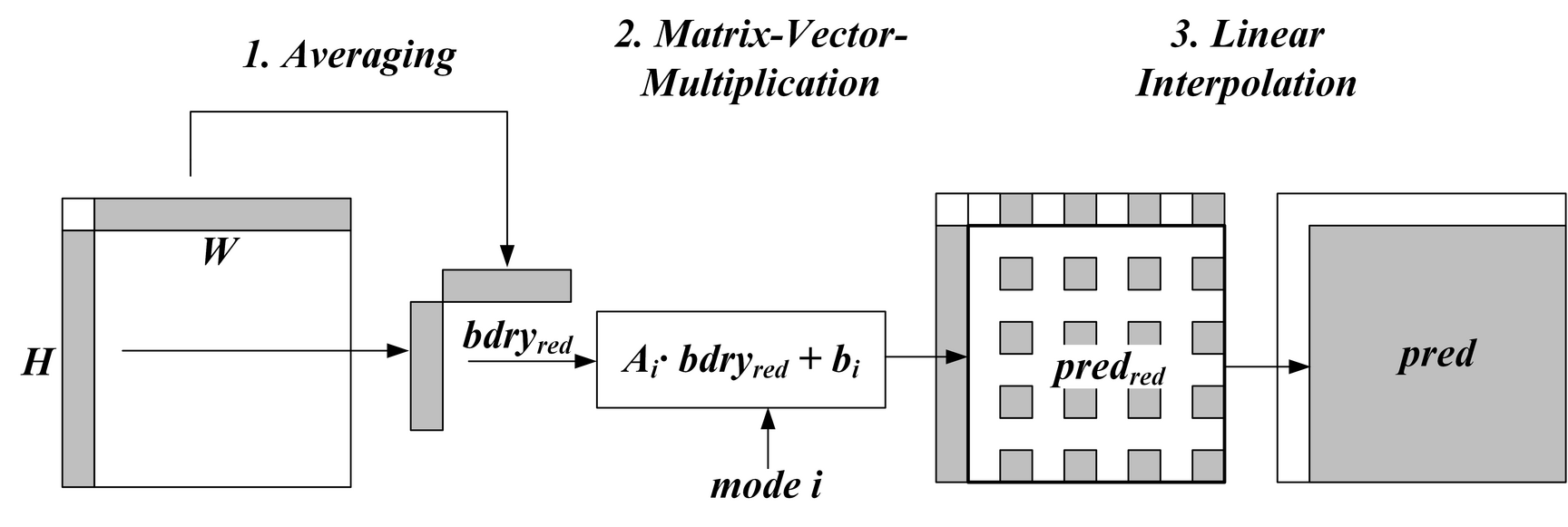

One variant of this approach, called matrix-based intra prediction (MIP), is part of the current working draft of the Versatile Video Coding (VVC) standard. Here, the prediction signal is generated in three steps, which are also illustrated in the figure. First, the input of the prediction, i.e., the reconstructed boundary samples of the block, is downsampled. Then, a matrix-vector multiplication is applied to this downsampled input. Finally, the result is upsampled. The parameters of the matrices are trained with an algorithm similar to the one used for the neural-network-based predictors. MIP has a small memory requirement and does not increase the number of multiplications in comparison to existing intra prediction modes.
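The three steps can be sketched in Python for a square block. For illustration, the sketch assumes an 8x8 block whose 8 top and 8 left boundary samples are averaged down to 4 each, a hypothetical 16x8 matrix that produces a 4x4 reduced prediction, and linear interpolation that uses the original boundary samples as anchors. It omits details of the standardized process, such as integer weights with bit shifts and the transposed mode variants, and it uses an untrained averaging matrix in place of the trained ones; the function names are illustrative.

```python
def downsample_boundary(samples, out_size):
    """Step 1: average groups of neighbouring boundary samples."""
    factor = len(samples) // out_size
    return [sum(samples[i * factor:(i + 1) * factor]) / factor for i in range(out_size)]


def mat_vec(matrix, vec):
    """Step 2: matrix-vector multiplication."""
    return [sum(m * v for m, v in zip(row, vec)) for row in matrix]


def interpolate_1d(anchor, values, factor):
    """Linear interpolation between an anchor at position -1 and values[i] at
    position factor * (i + 1) - 1; returns len(values) * factor samples."""
    out, prev = [], anchor
    for v in values:
        for step in range(1, factor + 1):
            out.append(prev + (v - prev) * step / factor)
        prev = v
    return out


def mip_predict(top, left, matrix, red_bdry=4, red_pred=4):
    """Sketch of MIP for a square N x N block with N = len(top) = len(left).

    matrix: (red_pred * red_pred) x (2 * red_bdry) weights; trained in practice,
    hypothetical here.
    """
    n = len(top)
    factor = n // red_pred
    # Step 1: reduced boundary (reduced top samples followed by reduced left samples).
    bdry = downsample_boundary(top, red_bdry) + downsample_boundary(left, red_bdry)
    # Step 2: reduced prediction on a red_pred x red_pred grid (row-major).
    reduced = mat_vec(matrix, bdry)
    rows = [reduced[i * red_pred:(i + 1) * red_pred] for i in range(red_pred)]
    # Step 3a: horizontal upsampling of each reduced row, anchored at the left boundary.
    horiz = [interpolate_1d(left[factor * (i + 1) - 1], row, factor)
             for i, row in enumerate(rows)]
    # Step 3b: vertical upsampling of each column, anchored at the top boundary.
    pred = [[0.0] * n for _ in range(n)]
    for x in range(n):
        col = interpolate_1d(top[x], [h[x] for h in horiz], factor)
        for y in range(n):
            pred[y][x] = col[y]
    return pred


avg = [[1.0 / 8] * 8 for _ in range(16)]  # hypothetical untrained matrix: plain average
flat = mip_predict([100.0] * 8, [100.0] * 8, avg)
print(flat[0])  # [100.0, 100.0, 100.0, 100.0, 100.0, 100.0, 100.0, 100.0]
ramp = mip_predict([float(10 * i) for i in range(8)], [0.0] * 8, avg)
print([round(s, 2) for s in ramp[7]])
```

Since the matrix acts only on the 8 reduced boundary samples and produces only 16 reduced prediction samples, the stored matrices stay small, and the interpolation step needs no further matrix products.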

Adaptive transform selection

A central method of modern video codecs is to transform prediction residuals in order to achieve energy compaction. Video codecs such as HEVC use the Discrete Cosine Transform (DCT-II) and the Discrete Sine Transform (DST-VII) as transforms. Recent video codec developments also support transforms that are derived as Karhunen-Loève transforms (KLTs) from large sets of training data. To reduce complexity, these transforms are typically used as secondary transforms that operate only on the low-frequency coefficients. For our response to the Call for Proposals, we designed mode-dependent secondary transforms for the coding of intra-prediction residuals. The coupling of these transforms with the primary transforms is mode dependent as well, so that five different transforms are possible for each intra-prediction mode.
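A KLT is given by the eigenvectors of the covariance matrix of the training vectors, ordered by decreasing variance. The Python sketch below derives such a basis with a plain Jacobi eigenvalue routine and applies it as a secondary transform to the first few primary coefficients only. The training data, the choice of four low-frequency coefficients, their ordering, and all function names are hypothetical; the sketch does not reproduce the transform sets of the proposal. In a mode-dependent scheme, a separate basis of this kind would be trained for each intra-prediction mode.

```python
import math
import random


def covariance(vectors):
    """Sample covariance matrix of equally long training vectors."""
    n, dim = len(vectors), len(vectors[0])
    mean = [sum(v[i] for v in vectors) / n for i in range(dim)]
    return [[sum((v[i] - mean[i]) * (v[j] - mean[j]) for v in vectors) / n
             for j in range(dim)] for i in range(dim)]


def jacobi_eigh(a, sweeps=50, tol=1e-18):
    """Eigen-decomposition of a symmetric matrix by cyclic Jacobi rotations.
    Returns (eigenvalues, matrix whose columns are the eigenvectors)."""
    n = len(a)
    a = [row[:] for row in a]
    v = [[float(i == j) for j in range(n)] for i in range(n)]
    for _ in range(sweeps):
        if sum(a[i][j] ** 2 for i in range(n) for j in range(n) if i != j) < tol:
            break
        for p in range(n - 1):
            for q in range(p + 1, n):
                if a[p][q] == 0.0:
                    continue
                theta = (a[q][q] - a[p][p]) / (2.0 * a[p][q])
                t = math.copysign(1.0, theta) / (abs(theta) + math.sqrt(theta * theta + 1.0))
                c = 1.0 / math.sqrt(t * t + 1.0)
                s = t * c
                for k in range(n):  # rotate columns p, q of A and V
                    a[k][p], a[k][q] = c * a[k][p] - s * a[k][q], s * a[k][p] + c * a[k][q]
                    v[k][p], v[k][q] = c * v[k][p] - s * v[k][q], s * v[k][p] + c * v[k][q]
                for k in range(n):  # rotate rows p, q of A
                    a[p][k], a[q][k] = c * a[p][k] - s * a[q][k], s * a[p][k] + c * a[q][k]
    return [a[i][i] for i in range(n)], v


def derive_klt(training_vectors):
    """KLT basis (row k = k-th basis function) sorted by decreasing variance."""
    eigvals, vecs = jacobi_eigh(covariance(training_vectors))
    order = sorted(range(len(eigvals)), key=lambda k: -eigvals[k])
    basis = [[vecs[i][k] for i in range(len(vecs))] for k in order]
    return basis, [eigvals[k] for k in order]


def apply_secondary(primary_coeffs, basis):
    """Transform only the first len(basis) (low-frequency) primary coefficients."""
    k = len(basis)
    low = primary_coeffs[:k]
    return [sum(b * c for b, c in zip(row, low)) for row in basis] + primary_coeffs[k:]


def invert_secondary(coeffs, basis):
    """The basis is orthonormal, so its transpose undoes the secondary transform."""
    k = len(basis)
    low = coeffs[:k]
    return [sum(basis[r][i] * low[r] for r in range(k)) for i in range(k)] + coeffs[k:]


rng = random.Random(0)
# Hypothetical training set: four strongly correlated low-frequency coefficients.
training = []
for _ in range(500):
    common = rng.gauss(0.0, 1.0)
    training.append([common + 0.1 * rng.gauss(0.0, 1.0) for _ in range(4)])
basis, variances = derive_klt(training)
print([round(x, 3) for x in variances])

coeffs = [3.0, 2.9, 3.1, 3.0, 0.5, -0.2]  # 4 low-frequency + 2 untouched coefficients
sec = apply_secondary(coeffs, basis)
print([round(x, 3) for x in sec])
```

On this correlated toy data, almost all of the energy of the four low-frequency coefficients ends up in the first secondary coefficient, which is the energy compaction the secondary transform is meant to provide; the high-frequency coefficients pass through unchanged, which keeps the complexity low.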

References

- J. Pfaff, H. Schwarz, D. Marpe, B. Bross, S. De-Luxán-Hernández, P. Helle, C. R. Helmrich, T. Hinz, W. Q. Lim, J. Ma, T. Nguyen, J. Rasch, M. Schäfer, M. Siekmann, G. Venugopal, A. Wieckowski, M. Winken, and T. Wiegand, “Video Compression Using Generalized Binary Partitioning and Advanced Techniques for Prediction and Transform Coding,” IEEE Transactions on Circuits and Systems for Video Technology, 2019.

- J. Pfaff, P. Helle, D. Maniry, S. Kaltenstadler, B. Stallenberger, P. Merkle, M. Siekmann, H. Schwarz, D. Marpe, and T. Wiegand, “Intra Prediction Modes based on Neural Networks,” doc. JVET-J0037, San Diego, Apr. 2018.

- J. Pfaff, P. Helle, D. Maniry, S. Kaltenstadler, W. Samek, H. Schwarz, D. Marpe, and T. Wiegand, “Neural network based intra prediction for video coding,” in Proc. SPIE Applic. of Digital Image Process. XLI, vol. 10752, Sep. 2018.

- P. Helle, J. Pfaff, M. Schäfer, R. Rischke, H. Schwarz, D. Marpe, and T. Wiegand, “Intra Picture Prediction for Video Coding with Neural Networks,” in Proc. IEEE Data Compression Conf. (DCC), Snowbird, Mar. 2019.