Intelligent Total Body Scanner

April 2021 – March 2025

This project has received funding from the European Union's Horizon 2020 research and innovation programme under grant agreement No. 965221.

Skin cancer is the most common human malignancy and its incidence has been increasing in the last decade. Within the general category of skin cancers, melanoma constitutes the main cause of death. Fortunately, melanoma may be cured if treated at an early stage.

However, detecting early-stage melanoma is not easy even for expert dermatologists. Because the state-of-the-art process of manually screening each patient with a handheld dermoscope is labor-, time- and cost-intensive, only a small fraction of the skin area can be covered with a reasonable expenditure of resources. The risk of missing a melanoma therefore remains significant.

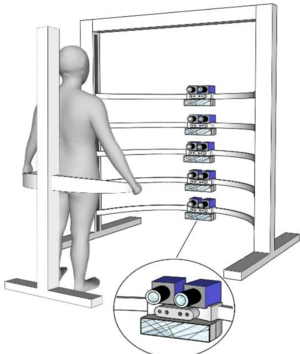

The iToBoS research project, funded by the European Union's Horizon 2020 research programme, will develop a novel diagnostic tool in the form of an AI-driven total body scanner that combines different sources of patient data. The tool aims to significantly speed up the melanoma screening process and minimize the associated healthcare costs, while providing transparent feedback to expert clinicians about the AI's diagnoses. The combination of all this personalized information will result in an accurate, detailed and structured assessment of the patient's pigmented skin lesions.

Project Partners

- Universitat de Girona

- Optotune AG

- IBM Israel - Science and Technology Ltd.

- Robert Bosch España SA

- BARCO NV

- National Technical University of Athens

- Leibniz Universität Hannover

- Fundació Clínic per a la Recerca Biomèdica

- RICOH Spain IT services SLU

- Trilateral Research Limited

- Università degli Studi di Trieste

- Coronis Computing SL

- Torus Actions SAS

- V7 Ltd.

- Isahit

- University of Queensland

- Magyar Tudományos Akadémia Számít. és Automatizálási Kutatóintézet

- Melanoma Patient Network Europe

Publications

| [1] | Christopher J. Anders, Leander Weber, David Neumann, Wojciech Samek, Klaus-Robert Müller, and Sebastian Lapuschkin. “Finding and removing Clever Hans: Using explanation methods to debug and improve deep models”. In: Information Fusion 77 (2022), pp. 261-295. ISSN: 1566-2535. DOI: https://doi.org/10.1016/j.inffus.2021.07.015. URL: https://www.sciencedirect.com/science/article/pii/S1566253521001573. |

| [2] | Leila Arras, Ahmed Osman, and Wojciech Samek. “CLEVR-XAI: A benchmark dataset for the ground truth evaluation of neural network explanations”. In: Information Fusion 81 (2022), pp. 14-40. ISSN: 1566-2535. DOI: https://doi.org/10.1016/j.inffus.2021.11.008. URL: https://www.sciencedirect.com/science/article/pii/S1566253521002335. |

| [3] | Sami Ede, Serop Baghdadlian, Leander Weber, An Nguyen, Dario Zanca, Wojciech Samek, and Sebastian Lapuschkin. "Explain to Not Forget: Defending Against Catastrophic Forgetting with XAI". In: Machine Learning and Knowledge Extraction. Ed. by Andreas Holzinger, Peter Kieseberg, A. Min Tjoa, and Edgar Weippl. Cham: Springer International Publishing, 2022, pp. 1-18. ISBN: 978-3-031-14463-9. URL: https://link.springer.com/chapter/10.1007/978-3-031-14463-9_1. |

| [4] | Anna Hedström, Leander Weber, Daniel Krakowczyk, Dilyara Bareeva, Franz Motzkus, Wojciech Samek, Sebastian Lapuschkin, and Marina M.-C. Höhne. "Quantus: An Explainable AI Toolkit for Responsible Evaluation of Neural Network Explanations and Beyond". In: Journal of Machine Learning Research 24.34 (2023), pp. 1-11. URL: http://jmlr.org/papers/v24/22-0142.html. |

| [5] | Franz Motzkus, Leander Weber, and Sebastian Lapuschkin. "Measurably Stronger Explanation Reliability Via Model Canonization". In: 2022 IEEE International Conference on Image Processing (ICIP). 2022, pp. 516-520. DOI: 10.1109/ICIP46576.2022.9897282. URL: https://ieeexplore.ieee.org/document/9897282. |

| [6] | Jiamei Sun, Sebastian Lapuschkin, Wojciech Samek, and Alexander Binder. “Explain and improve: LRP-inference fine-tuning for image captioning models”. In: Information Fusion 77 (2022), pp. 233-246. ISSN: 1566-2535. DOI: 10.1016/j.inffus.2021.07.008. URL: https://www.sciencedirect.com/science/article/pii/S1566253521001494. |

| [7] | Frederik Pahde, Maximilian Dreyer, Wojciech Samek, and Sebastian Lapuschkin. “Reveal to Revise. An Explainable AI Life Cycle for Iterative Bias Correction of Deep Models”. In: Medical Image Computing and Computer Assisted Intervention – MICCAI 2023. 26th International Conference, Vancouver, BC, Canada, October 8–12, 2023, Proceedings, Part I. 26th International Conference on Medical Image Computing and Computer Assisted Intervention. MICCAI (Vancouver Convention Centre, Vancouver, British Columbia, Canada, Oct. 8–12, 2023). Ed. by Hayit Greenspan, Anant Madabhushi, Parvin Mousavi, Septimiu Salcudean, James Duncan, Tanveer Syeda-Mahmood, and Russell Taylor. The Medical Image Computing and Computer Assisted Intervention Society (MICCAI). Gewerbestraße 11, 6330 Cham, Switzerland: Springer Nature Switzerland, Oct. 1, 2023, pp. 596–606. ISBN: 978-3-031-43895-0. DOI: 10.1007/978-3-031-43895-0_56. |

| [8] | Maximilian Dreyer, Frederik Pahde, Christopher J. Anders, Wojciech Samek, and Sebastian Lapuschkin. “From Hope to Safety. Unlearning Biases of Deep Models via Gradient Penalization in Latent Space”. In: Proceedings of the AAAI Conference on Artificial Intelligence. The 38th Annual AAAI Conference on Artificial Intelligence. AAAI (Vancouver Convention Centre – West Building, Canada Pl, Vancouver, BC V6C 3G3, Feb. 20–27, 2024). Vol. 38. 19. Association for the Advancement of Artificial Intelligence. 1101 Pennsylvania Ave, NW, Suite 300, Washington, DC 20004, United States of America: AAAI Press, Mar. 24, 2024, pp. 21046–21046. ISBN: 978-1-57735-887-9. DOI: 10.1609/aaai.v38i19.30096. |