AMAVI

Acoustical Modeling for Auralizations in Virtual Infrastructure

November 2022 - October 2024

Objectives

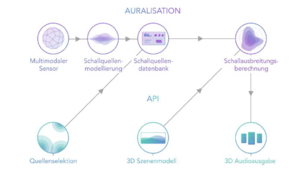

The project aims to develop a hybrid method for calculating sound propagation on GPUs under simplified free-field conditions. In addition, because suitable acoustic models for describing complex sound sources in real-time auralizations do not yet exist, a database format is being developed for moving sources with arbitrary radiation behavior that are composed of partial sound sources.
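As a rough illustration of what free-field propagation from a moving source involves, the following sketch computes the propagation delay, the spherical-spreading gain, and the Doppler factor for a point source. It is not the project's hybrid method: the point-source model, the speed of sound of 343 m/s, and the function names `free_field_path` and `doppler_factor` are assumptions made for this example.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, assumed value for air at roughly 20 degrees Celsius


def free_field_path(source, receiver, c=SPEED_OF_SOUND):
    """Return (delay in seconds, pressure gain) for a point source in free field."""
    r = math.dist(source, receiver)
    if r <= 0.0:
        raise ValueError("source and receiver must not coincide")
    delay = r / c    # time the wavefront needs to reach the receiver
    gain = 1.0 / r   # spherical spreading, referenced to a distance of 1 m
    return delay, gain


def doppler_factor(source, velocity, receiver, c=SPEED_OF_SOUND):
    """Return f_received / f_emitted for a moving source and a static receiver."""
    r = math.dist(source, receiver)
    if r <= 0.0:
        raise ValueError("source and receiver must not coincide")
    # Unit vector pointing from the source towards the receiver.
    direction = [(rc - sc) / r for sc, rc in zip(source, receiver)]
    # Radial speed is positive while the source approaches the receiver.
    v_radial = sum(v * d for v, d in zip(velocity, direction))
    return c / (c - v_radial)


if __name__ == "__main__":
    # Hypothetical train passing at 30 m/s, 25 m to the side of the listener.
    src, vel, rcv = (-50.0, 25.0, 0.0), (30.0, 0.0, 0.0), (0.0, 0.0, 0.0)
    print(free_field_path(src, rcv), doppler_factor(src, vel, rcv))
```

A real-time implementation would evaluate these quantities per partial source and per audio block, which is one reason the computation lends itself to GPUs.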

To reduce the time spent on manual metadata evaluation, an automated evaluation based on AI techniques is being developed.

Approach

To achieve these objectives, sound sources are to be detected multimodally. This involves assembling a sensor setup for train measurements in which audio recordings with a microphone array are carried out in synergy with other projects and complemented by additional data such as video, weather data, or geodata. Algorithms for automated evaluation are also being developed, for instance to detect train type, model, and speed from video.

Furthermore, complex sound source models are to be developed with a focus on accurately describing partial sound sources. This includes individual radiation patterns, audio loops for flexible scene integration, the assembly of different train configurations, the automated integration of metadata, and the investigation of algorithms for interpolating between different speeds.
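One simple candidate for interpolating between speeds is an equal-power crossfade between the two audio loops recorded at the neighboring measured speeds. The sketch below only computes the blend gains. The crossfade itself, the function name `crossfade_gains`, and the example speeds are assumptions for illustration, not the algorithm chosen in the project.

```python
import math
from bisect import bisect_right


def crossfade_gains(speed, measured_speeds):
    """Return (i, j, gain_i, gain_j) for blending the loops at indices i and j.

    measured_speeds must be sorted in ascending order. Speeds outside the
    measured range are clamped to the nearest available loop.
    """
    speeds = list(measured_speeds)
    if not speeds:
        raise ValueError("at least one measured speed is required")
    if speed <= speeds[0]:
        return 0, 0, 1.0, 0.0
    if speed >= speeds[-1]:
        last = len(speeds) - 1
        return last, last, 1.0, 0.0
    j = bisect_right(speeds, speed)  # first loop recorded above the target speed
    i = j - 1                        # last loop recorded at or below it
    t = (speed - speeds[i]) / (speeds[j] - speeds[i])
    # Equal-power law: gain_i**2 + gain_j**2 == 1 for every t in [0, 1].
    return i, j, math.cos(0.5 * math.pi * t), math.sin(0.5 * math.pi * t)


if __name__ == "__main__":
    # Hypothetical loops recorded at 80, 100 and 120 km/h; target speed 90 km/h.
    print(crossfade_gains(90.0, [80.0, 100.0, 120.0]))
```

A plain crossfade keeps the overall level steady but does not shift speed-dependent tonal components, so it is better seen as a baseline against which more elaborate interpolation algorithms can be compared.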

Complex sound propagation models are also under development, with the optimization of algorithms for GPU computation at the center. A promising approach combines geometric sound propagation methods (such as ray tracing), implementation of the algorithms in CUDA using the Unreal Engine, runtime optimization by separating the visual and acoustic scene models, and assessment of real-time capability through targeted simplification.
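The core step of geometric methods such as ray tracing can be sketched as follows: a sound ray is intersected with a surface and then reflected specularly. The example uses a single infinite plane and plain Python for readability. The project targets CUDA, and all function names here are hypothetical.

```python
import math


def _dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def intersect_plane(origin, direction, plane_point, normal):
    """Return the ray parameter t of the hit, or None if the ray misses.

    t equals the travelled distance when direction has unit length.
    """
    denom = _dot(direction, normal)
    if abs(denom) < 1e-12:  # ray runs parallel to the plane
        return None
    offset = [p - o for p, o in zip(plane_point, origin)]
    t = _dot(offset, normal) / denom
    return t if t > 0.0 else None  # ignore hits behind the ray origin


def reflect(direction, normal):
    """Mirror a direction about a unit-length surface normal."""
    scale = 2.0 * _dot(direction, normal)
    return tuple(d - scale * n for d, n in zip(direction, normal))


def trace_first_bounce(origin, direction, plane_point, normal):
    """Return (hit point, reflected direction, t), or None if there is no hit."""
    t = intersect_plane(origin, direction, plane_point, normal)
    if t is None:
        return None
    hit = tuple(o + t * d for o, d in zip(origin, direction))
    return hit, reflect(direction, normal), t


if __name__ == "__main__":
    # Ray starting 1 m above the ground plane z = 0, heading down at 45 degrees.
    s = 1.0 / math.sqrt(2.0)
    print(trace_first_bounce((0.0, 0.0, 1.0), (s, 0.0, -s),
                             (0.0, 0.0, 0.0), (0.0, 0.0, 1.0)))
```

Because every ray is processed independently, this kind of computation maps naturally onto one GPU thread per ray. A simplified acoustic scene model with fewer surfaces than the visual one reduces the number of intersection tests per ray.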

Prospects

Exploitation prospects include strengthening the exploitation strategy of EAV-Infra and licensing the auralization plugin for immersive VR/XR applications (gaming, e-learning, studies, teleoperation, etc.).

Strategic potential lies in tapping into additional industries that can benefit from immersive 3D audio solutions and in expanding expertise in AI-based data processing for multisensory systems.